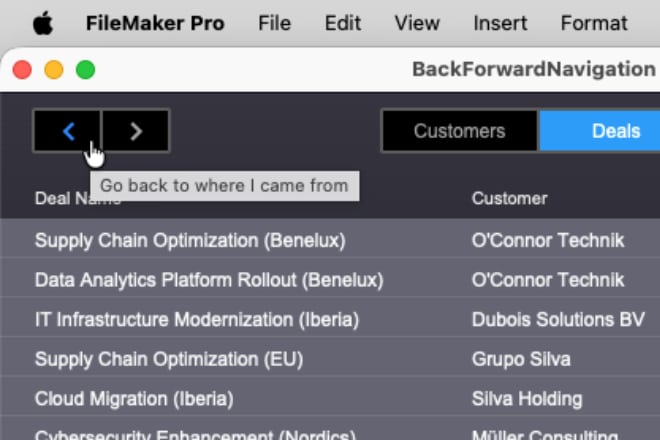

Back button in 5 minutes

You’ve worked for weeks (or months) to deliver the best FileMaker solution ever. Then your client asks for “just one last small improvement”: “Can you add a Back button to the navigation, like web browsers have?” What if I told you FileMaker 2025 has changed the game, and you can add a Back button in just a few minutes?

Our Greatest Mistakes at FileMaker Konferenz 2023

We are proud to have sponsored the German-speaking FileMaker Konferenz again this year. This time we came to Basel, Switzerland, to present our greatest mistakes so that others can learn from them, along with some of our recent successes to inspire the conference attendees.

Monitor FileMaker Server Temperature with Bridge for Phidgets 4

Bridge for Phidgets 4 adds support for 13 new VINT sensors and for FileMaker Server. Learn how to use it to automate monitoring of your server temperature, track access using RFID tags, unlock doors, and do anything else you can imagine, 24 hours a day, without having to leave a dedicated FileMaker Pro client open all the time.

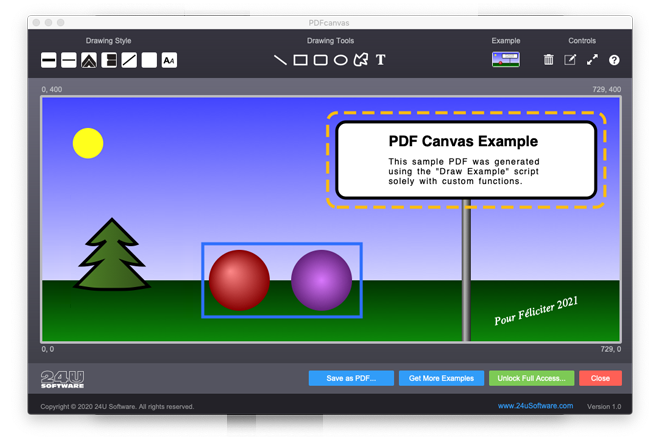

PDF Examples Updated

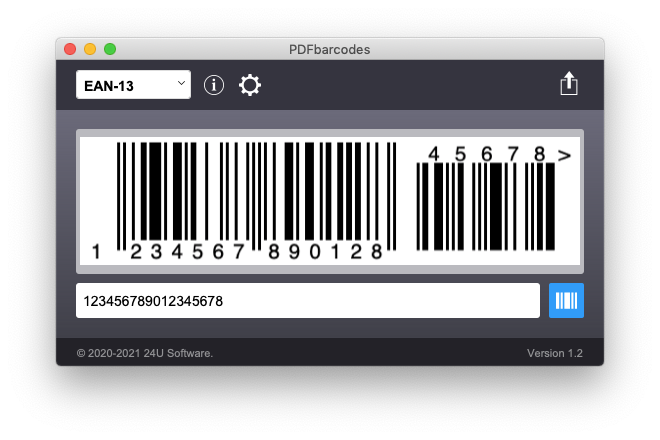

All three of our recently released free examples for creating and manipulating PDFs solely using FileMaker custom functions have been updated. Get the latest version to add clickable hyperlinks to PDFs exported from your custom FileMaker Apps, generate print-quality barcodes, or dynamically draw scalable vector graphics.

Generate Scalable PDF Barcodes Purely with Calculations

Have you ever dreamed of generating high-quality scalable barcodes in FileMaker solely using calculations, with no plug-ins, fast enough for real use, in a way that is compatible with server-side scripts, FileMaker Go, and FileMaker WebDirect, and completely free of charge? Your dream has just come true.

Visualize Data with PDF Vector Graphics

Vector graphics can be scaled without any loss of quality or accuracy. That can be very helpful for visual representation of data, such as printed reports or complex structures. In my example, generating vector graphics as PDF is handled solely by 42 custom functions, and it is 100% compatible with server-side scripts, WebDirect, and even FileMaker Go.

Adding links to PDF from FileMaker

As a Claris partner, we resell FileMaker licenses. As an extra benefit, we also provide a nice PDF document with all information about the license, including download links. To generate this document from our FileMaker-based CRM, I needed a way to include functional web links in it. So I wrote a custom function that does it, without any plug-ins.

Marvelous Optimization #4 - Optimized Again

Last September I wrote an article about a custom function that I optimized to evaluate hundreds of times faster. At the end of the article, I challenged my readers and myself by claiming that the already optimized custom function could be optimized even further. Do you remember? Later on I did optimize it again, and talked about it.

Marvelous Optimization #3 - Faster Imports

This example demonstrates that even a single-step script can be optimized. You just have to think a little bit outside the box... I showed this as a surprise in my session Marvelous Optimizations at Pause On Error [x] London 2011. I used a sample file with 25 fields and 5,000 records and imported these records 5 times in a row in just 13 seconds.

Marvelous Optimization #2

The second example I showed in my session Marvelous Optimizations at Pause On Error [x] London 2011 was the script for selecting a Random Set of Records. I found this example in the FileMaker Knowledge Base and optimized it to run at least 158 times faster when selecting 10 random records out of 50,000.

Marvelous Optimization #1

This is the first example I showed in my session Marvelous Optimizations at Pause On Error [x] London 2011. I already wrote about this optimization some time ago. It’s the one that led me to unveil the Marvelous Optimization Formula. I took the example and added FM Bench Detective to it so that I could measure and examine exactly what happens.

Random Set of Records (optimized)

I noticed that one of the articles updated in the official FileMaker Knowledge Base on September 23, 2011 explained how to select a random set of records in a FileMaker database. I wondered how fast the currently recommended technique was and whether I could make it faster with the help of FM Bench.

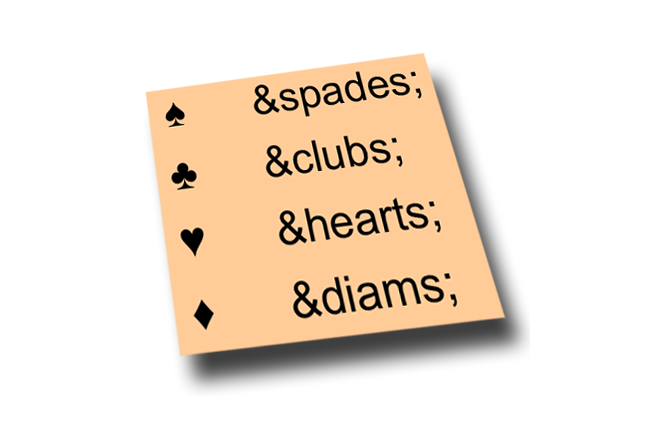

FileMaker Custom Function for HTML Entities

Just today I needed to decode HTML-encoded text in FileMaker. After checking a few functions, I found one that seemed pretty good. Written in 2009 by Fabrice Nordman and named HTMLencoded2Text, this custom function seemed at first sight to convert my imported text correctly.

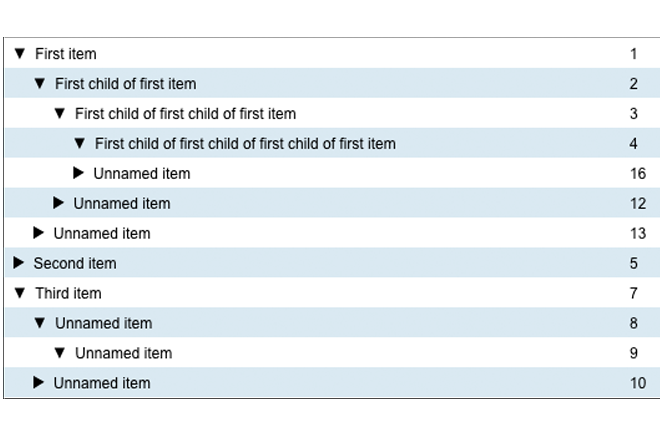

Infinite Hierarchy

Last week Hal Gumbert mentioned on Twitter that he was “working on a FileMaker quote to display and edit a BOM ( Build of Materials ) that can go 9 levels deep.” Probably the most efficient and user-friendly way to implement this is to use a tree view with collapsible/expandable items.
